At Nesta, our work doesn't sit neatly in single projects with clear start and end points. We are mission-led, which means sustained, portfolio-wide efforts to shift systems: from reducing home carbon emissions, to halving obesity, to improving children's outcomes in the early years. Evaluating progress across a portfolio like this means asking a different question from standard project-level impact evaluation, and different questions require different tools. We don't ask "Did this intervention work?" We ask "Are we actually moving the needle on the mission?"

A common way to evaluate projects is through retrospective impact assessment. But this approach is designed around discrete interventions, and it can't tell us what we need to know in time to act on it. Randomised controlled trials (RCTs), often described as the gold standard for evaluation, are powerful in the right contexts but rarely suited to the messy reality of systemic change, where multiple organisations are pulling in the same direction and the effect of any one of them is hard to isolate.

So we use theory-based evaluation as an impact assessment tool. Theory-based evaluation shifts the question from whether change happened to how and why it happened. Rather than treating evaluation as a post-hoc judgement on a project, we can use it as a live management tool: one that helps us test our assumptions, trace our contribution to impact, and plan our future portfolio of work.

Over the past year, applying a theory-based evaluation framework across Nesta's portfolios of work has sharpened our thinking considerably. Here are four things we have learned that could help other mission-driven organisations do the same.

Where RCTs tell you whether something worked, theory-based evaluation tells you how and why, and that distinction matters enormously in complex, mission-led work. Theory-based evaluation turns the evaluation process from a "black box" into a navigable map of change, one that accounts for local context, overlapping interventions, and the many actors who shape a final result alongside you.

This is where theory-based evaluation becomes a practical tool. Rather than attempting to claim sole credit for an outcome (which in a complex system is rarely credible), theory-based evaluation draws on a diverse mix of quantitative and qualitative evidence to build a rigorous account of your role in the wider system. The goal is not to prove that you caused the change, but to demonstrate that your activities were a necessary and significant part of it. That is a more honest, and ultimately more useful, standard of evidence for mission-driven work.

To track the progress of a broad and ambitious mission, you must first zoom in on the specific pathways to impact. A theory of change for a mission serves as a portfolio- or systems-level assessment of what research and evidence tell us are the most important determinants of achieving the goal, as well as the external barriers to success. This mapping process should draw heavily on existing research syntheses to identify where evidence suggests policy action is most needed.

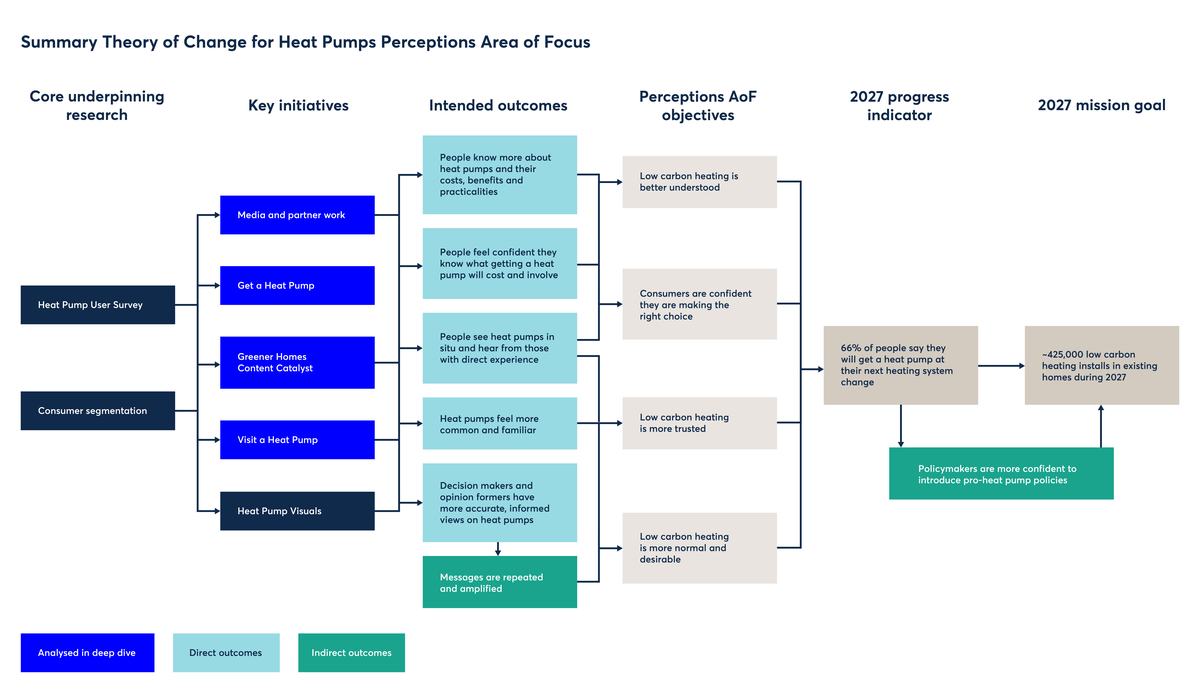

A practical approach is to divide this broad systems view into areas of focus, each targeting a specific barrier or aspect of the problem. For example, our work on home decarbonisation is articulated along four areas: heat pump perceptions, installer capacity, financial incentives and consumer journeys. This structured approach allows teams to test specific hypotheses regarding their logic, assumptions, and contributions without becoming overwhelmed by the daunting scale of the entire mission.

Figure 1: Visual representation of the heat pumps perceptions area of focus, breaking down how each research project and key initiative is hypothesised to impact the mission goal.

One of the most persistent misconceptions about theory-based evaluation is that applying it requires a large, dedicated resource commitment from the outset. In practice, theory-based evaluation exists on a spectrum. At its lightest, it might mean articulating the assumptions behind a single project and checking in on whether they hold as the work unfolds. At its most developed, theory-based evaluation involves a fully mapped theory of change across a portfolio of work, with systematic evidence collection against each causal link. Both are legitimate, and the right level of intensity depends on the maturity of the programme, the stakes involved, and the capacity available.

Starting small, even just making your logic explicit and revisiting it periodically, already shifts the culture towards learning rather than post-hoc justification. The important thing is to begin somewhere, and to treat the framework as something that grows with the work rather than a precondition for starting it.

For example, for our impact framework, a light-touch theory-based evaluation with lower standards of evidence felt more proportionate. This is because our main aim was to assess whether we could have some confidence that our mission work is contributing to moving the dial on our missions' goals, and that our underlying assumptions are correct. By contrast, we are adopting a much more robust theory-based evaluation approach for the Greener Homes Content Catalyst (GHCC), which aims to enable producers and content creators to tell stories about reducing domestic carbon emissions more creatively and effectively.

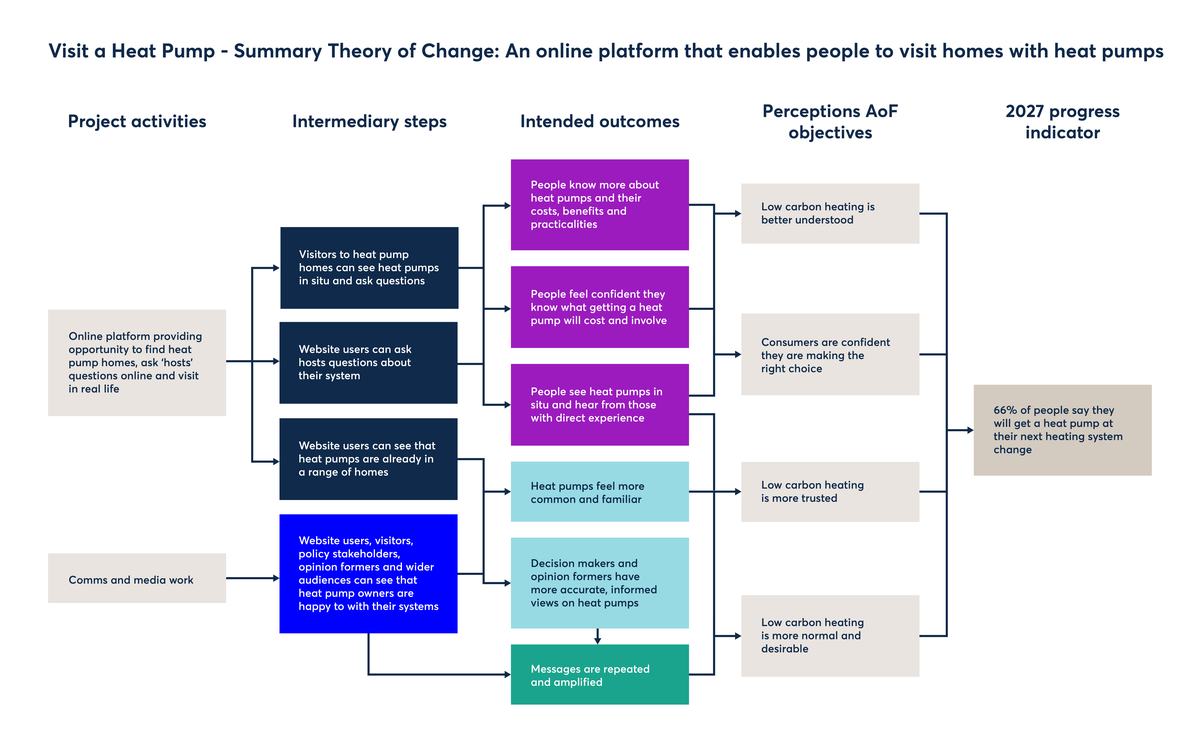

Figure 2: Visual representation of Visit a Heat Pump theory of change

Evaluation should function as a compass for the journey rather than a post-mortem of the destination. Traditional evaluations often deliver their results years after a programme has ended, far too late for the findings to drive meaningful course correction. By consistently monitoring the causal links within our theory of change, we can identify early where our logic is failing or where our initial assumptions have proved incorrect. This allows our mission teams to reallocate resources and refine their strategic approach to mission-driven goals while there is still time to improve outcomes.

For example, when reviewing the key assumptions behind Visit a Heat Pump and its theory of change (Figure 2), we found that the most likely pathway to scaled impact was through communications and media coverage, rather than through the direct effect on individual households taking part. This led the team to step up its efforts to secure press coverage for the initiative, while also focusing on growing the number of hosts and visits.

These four insights represent a shift in how Nesta approaches its missions: from passive monitoring to active, theory-led learning. Taken together, they suggest a practical path for any mission-driven organisation willing to treat evaluation not as a bureaucratic requirement but as a genuine source of strategic intelligence. Theory-based evaluation methods are adaptable and easier to adopt than is often assumed, and the payoff is substantial: knowing not just what you achieved but why, and being able to act on that while it still matters.

If you are interested in learning more about using theory-based evaluation to assess the contribution of your work towards mission-led goals, please contact Martina Vojtkova by email.